
What are Linear Regression and Multiple Linear Regression in AI?

Thu, 06 Apr 2023 by garethbrown

Linear Regression

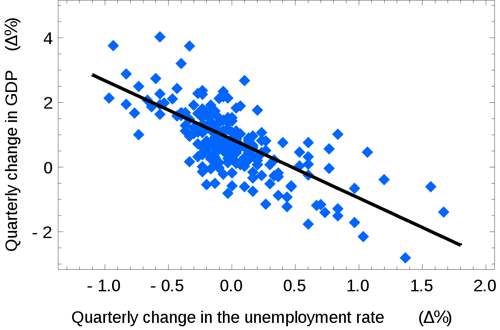

Linear regression is a statistical technique used to model the relationship between a dependent variable and one or more independent variables. The goal is to find a linear equation that best describes the relationship between these variables.

In simple linear regression, there is only one independent variable. The linear equation takes the form of y = mx + b, where y is the dependent variable, x is the independent variable, m is the slope of the line, and b is the y-intercept.

(Image credit Wikipedia)

The algorithm works by finding the values of m and b that bring the predicted values of y as close as possible to the actual values of y for a given set of x values. The difference between the actual and predicted value for a single data point is called the error or residual. A method called ordinary least squares chooses m and b so that the sum of the squared residuals is as small as possible.
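As a minimal sketch of ordinary least squares for a single independent variable, the slope and intercept can be computed directly from the means of the data. The function name `fit_simple_ols` and the sample data are illustrative assumptions, not part of any library; the data is generated from y = 2x + 1 so the result is easy to check.

```python
def fit_simple_ols(xs, ys):
    """Return (m, b) that minimize the sum of squared residuals for y = m*x + b."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need at least two (x, y) pairs of equal length")

    x_mean = sum(xs) / n
    y_mean = sum(ys) / n

    # Spread of x around its mean, and how x and y vary together.
    sxx = sum((x - x_mean) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("all x values are identical, so the slope is undefined")
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))

    m = sxy / sxx              # slope
    b = y_mean - m * x_mean    # y-intercept
    return m, b


# Hypothetical sample data lying exactly on the line y = 2x + 1.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [3.0, 5.0, 7.0, 9.0]

m, b = fit_simple_ols(xs, ys)
print(m, b)  # 2.0 1.0
```

With noisy real-world data the points will not lie exactly on a line, and the same formulas return the line with the smallest total squared error.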

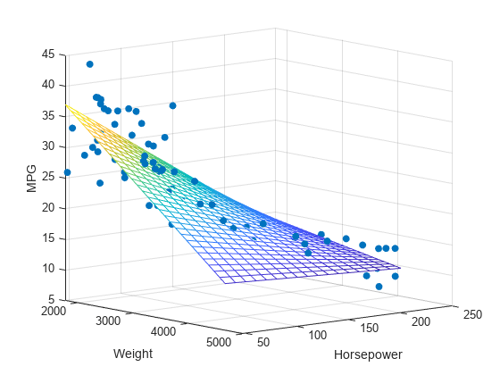

Multiple Linear Regression

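In multiple linear regression, there are two or more independent variables. The linear equation takes the form of y = b0 + b1x1 + b2x2 + ... + bnxn, where b0 is the intercept and b1, b2, ..., bn are the coefficients (slopes) for the independent variables x1, x2, ..., xn. Each coefficient describes how much y changes when its variable increases by one unit while the other variables are held constant.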

(Image credit mathworks.com)

The algorithm works in the same way as in simple linear regression, but instead of finding one slope and one intercept, it finds one coefficient per independent variable plus one intercept. The goal is still to minimize the sum of the squared differences between the predicted values of y and the actual values of y for the given data.
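One way to find several coefficients at once is to solve the so-called normal equations, (XᵀX)b = Xᵀy, where X holds the independent variables with a leading column of ones for the intercept. The sketch below does this in plain Python with Gaussian elimination. The function name `fit_multiple_ols` and the sample data are assumptions made for illustration; in practice a numerical library would normally be used instead, and this is not necessarily how any particular tool implements it.

```python
def fit_multiple_ols(rows, ys):
    """Return [b0, b1, ..., bn] that minimize the sum of squared residuals."""
    # Prepend a constant 1.0 to each row so that b0 acts as the intercept.
    design = [[1.0] + [float(v) for v in row] for row in rows]
    k = len(design[0])

    # Build the normal equations (X^T X) b = X^T y as an augmented matrix.
    aug = []
    for i in range(k):
        row = [sum(r[i] * r[j] for r in design) for j in range(k)]
        row.append(sum(r[i] * y for r, y in zip(design, ys)))
        aug.append(row)

    # Gaussian elimination with partial pivoting.
    for col in range(k):
        pivot = max(range(col, k), key=lambda r: abs(aug[r][col]))
        if abs(aug[pivot][col]) < 1e-12:
            raise ValueError("independent variables are linearly dependent")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, k):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, k + 1):
                aug[r][c] -= factor * aug[col][c]

    # Back substitution, from the last coefficient up to b0.
    coeffs = [0.0] * k
    for i in range(k - 1, -1, -1):
        known = sum(aug[i][j] * coeffs[j] for j in range(i + 1, k))
        coeffs[i] = (aug[i][k] - known) / aug[i][i]
    return coeffs


# Hypothetical data generated from y = 1 + 2*x1 + 3*x2.
rows = [[1, 2], [2, 1], [3, 4], [4, 3], [5, 5]]
ys = [9, 8, 19, 18, 26]

coeffs = fit_multiple_ols(rows, ys)
print([round(c, 6) for c in coeffs])  # approximately [1.0, 2.0, 3.0]
```

Because the sample data follows the equation exactly, the recovered values match the intercept and coefficients used to generate it, up to floating-point rounding.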

Once the algorithm has found the values of the coefficients, it can use them to make predictions for new values of the independent variable(s).
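Making a prediction is then just a matter of plugging new values into the linear equation. The following is a small sketch; the `predict` function and the coefficient values are hypothetical and chosen only to illustrate the arithmetic.

```python
def predict(coeffs, features):
    """Evaluate y = b0 + b1*x1 + ... + bn*xn.

    coeffs is [b0, b1, ..., bn]; features is [x1, ..., xn].
    """
    if len(features) != len(coeffs) - 1:
        raise ValueError("expected one feature per coefficient after the intercept")
    return coeffs[0] + sum(b * x for b, x in zip(coeffs[1:], features))


# Hypothetical fitted model: y = 1 + 2*x1 + 3*x2.
coeffs = [1.0, 2.0, 3.0]

prediction = predict(coeffs, [6.0, 2.0])
print(prediction)  # 19.0
```

The same function covers simple linear regression: with coefficients `[b, m]` and a single feature `[x]` it evaluates y = mx + b.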
